“Some Statistics” about sizeof(cat)’s feed list

Now, if only there was someone willing to generate some statistics on the contents of the OPML file, like how many Wordpress or Hugo websites, author nationality, what web server software, how many are behind Cancerflare, etc.

In direct response to the Personal feedlist and update post

This took me about 4 hours. The easy part is fingerprinting servers. The hard part is cleaning the resulting data, which is always 95% garbage.

This was written for sizeof(cat)’s “guest posting”. It is mirrored to 0x19 for multidirectional traffic flow. Thanks for hosting! Link to the original post

```
0x19# cat $(which cat) | wc -c
149856
0x19# ls -lh /bin/cat
-r-xr-xr-x 1 root bin 149856 Mar 20 15:16 /bin/cat
```

Using Lainchan Statistics Script

Refer to my post about the various server software used by the members of the Lainchan Webring for more information.

1. sed, grep, and awk the sizeofcat.opml file to get a newline-delimited list of URLs

Baby’s first shell spaghetti. We are better than copy+pasting.
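The actual pipeline is not shown, so here is a minimal sketch of what step 1 could look like. It assumes each feed is an `<outline>` element carrying an `htmlUrl` attribute (the site's homepage; swap in `xmlUrl` if you want the feed URLs) and that attributes use double quotes. The function name `opml_to_urls` is hypothetical.

```shell
# Hypothetical helper: print the unique htmlUrl values from an OPML file.
# Assumes double-quoted attributes. grep -o prints one match per line, even
# when several <outline> elements share a line. &amp; is decoded back to &.
opml_to_urls() {
    grep -o 'htmlUrl="[^"]*"' "$1" |
        sed -e 's/^htmlUrl="//' -e 's/"$//' -e 's/&amp;/\&/g' |
        sort -u
}
```

Usage: `opml_to_urls sizeofcat.opml > sites.txt` writes the file the PHP script below reads. A real XML tool such as `xmllint --xpath` would be more robust against single-quoted attributes or other entities.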

2. Grab HTTP headers with a modified version of my previous Lainchan script

The modified version of the script fetches the HTTP headers for each URL in sites.txt and checks whether the HTTP server sends the Server: header. It also records the status line and any Location: redirect, and it outputs CSV. The script looks like this:

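```php
<?php
// Requires PHP 8+ for str_starts_with().

// Give up on unresponsive hosts after 10 seconds instead of the
// default_socket_timeout (60 s by default).
stream_context_set_default(['http' => ['timeout' => 10]]);

// One URL per line; trim stray \r and whitespace, drop blank lines.
$f = file_get_contents("sites.txt");
$sites = array_filter(array_map('trim', explode("\n", $f)));

printf("\"URL\",\"Status\",\"Server\",\"Redirect\"\n");

foreach ($sites as $s) {
    $status = null;
    $server = null;
    $location = null;

    // get_headers() follows redirects and returns false on failure.
    // The @ keeps its warnings from being mixed into the CSV on stdout.
    $h = @get_headers($s);

    if (!$h || count($h) === 0) {
        $status = "offline";
    } else {
        // Headers from every response in a redirect chain are returned in
        // order, so each field ends up holding the last value seen.
        foreach ($h as $line) {
            if (str_starts_with($line, "HTTP")) {
                $status = $line;
            }
            if (str_starts_with($line, "Server:")) {
                $server = $line;
            }
            if (str_starts_with($line, "Location:")) {
                $location = $line;
            }
        }
    }

    printf("\"%s\",\"%s\",\"%s\",\"%s\"\n", $s, $status, $server, $location);
}
```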
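Since the rest of the processing happens in shell anyway, the same Server:/Location: extraction can be sketched there too. This is not the script used for the numbers below; it assumes a raw header dump like the one `curl -sIL` prints, and `last_header` is a hypothetical name. One difference worth knowing: HTTP field names are case-insensitive, so a server may legitimately send `server:` in lowercase, which the exact-case `str_starts_with($line, "Server:")` check above would miss.

```shell
# Hypothetical helper: print the value of the LAST occurrence of header $1
# in a raw HTTP header dump on stdin. Field names are compared
# case-insensitively, carriage returns are stripped, and leading
# whitespace in the value is removed.
last_header() {
    tr -d '\r' | awk -v name="$1" '
        index($0, ":") {
            field = substr($0, 1, index($0, ":") - 1)
            if (tolower(field) == tolower(name)) {
                value = substr($0, index($0, ":") + 1)
                sub(/^[ \t]+/, "", value)
                found = value
            }
        }
        END { if (found != "") print found }
    '
}
```

Usage: `curl -sIL --max-time 10 https://example.org/ | last_header Server` prints the last Server: value in the redirect chain. Note that `-I` sends a HEAD request, whereas PHP's `get_headers()` issues a GET by default, so a few servers may answer differently.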

3. Process the CSV output from the PHP script

I could have done more in PHP, but I would rather do it in shell. It’s faster for me to think in shell.

```
0x19# cat http-headers | tail -n +2 | wc -l
134
0x19# cat http-headers | tail -n +2 | awk -F',' '{print $3;}' | sed -e s/\"//g -e s/Server:\ //g -e s/[[:digit:]].*$//g | tr -d '/' | sort | uniq -c | sort -r
34 nginx
24 cloudflare
22 Apache
8 GitHub.com
5 Netlify
4 openresty
3 SucuriCloudproxy
3 OpenBSD httpd
3 Caddy
2 Squarespace
2 AmazonS3
1 nginx-rc
1 neocities
1 lighttpd
1 lantern
1 Vercel
1 Pepyaka
1 Microsoft-IIS
1 GSE
1 CloudFront
1 BunnyCDN-SIL
```

There are 14 “unknown” servers left over. You might be thinking, “hey, this is pretty good already,” but not quite: some of the URLs actually get redirected.
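For reuse, the one-liner above can be wrapped in a function. This is a sketch, not the command that produced the table: the sed expressions are quoted so the shell can never glob-expand `[[:digit:]]`, the counts are sorted numerically with `sort -rn`, and empty Server fields (offline sites or no Server: header) are labeled `unknown` rather than appearing as a blank line. The name `tally_servers` is hypothetical.

```shell
# Hypothetical helper: tally the Server column (field 3) of the CSV written
# by the PHP script. Skips the header row, strips quotes, the "Server: "
# prefix, everything from the first digit on, and any slashes.
tally_servers() {
    tail -n +2 "$1" |
        awk -F',' '{ print $3 }' |
        sed -e 's/"//g' -e 's/Server: //' -e 's/[[:digit:]].*$//' |
        tr -d '/' |
        sed -e 's/^$/unknown/' |
        sort | uniq -c | sort -rn
}
```

Usage: `tally_servers http-headers`. Like the original, splitting on commas assumes no field contains a comma.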

4. Realize HTTP headers do not provide enough of the information syscall(cat) wanted, goto 1;

New process

I initially thought I could use nmap and its OS detection to figure out which operating systems the servers are running. This information is more interesting to me than to (^-.-^), because he did not explicitly say he wanted to know the server operating systems, only the server web stacks. Typically, running nmap against systems you don’t own is frowned upon and considered a declaration of war.

I chose to use whatweb because it does not probe every port. Instead, it looks at what the HTTP server advertises, the contents of the HTML files, and how the HTTP daemon responds.

1. Scan

I told whatweb to output in its SQL format and loaded the result into MariaDB so it would be somewhat parsable.

```
0x19# whatweb --log-sql-create=dbinit.sql
0x19# whatweb -U="Mozilla/5.0 (X11; Ubuntu; Linux x86_64; rv:124.0) Gecko/20100101 Firefox/124.0" --input-file=sites.txt --log-sql=out.sql
0x19# mysql < dbinit.sql
0x19# mysql < out.sql
```

2. Process, Clean, Process again

Neither of the SQL files loaded into the database without manual modifications. I processed the records in the database using a different PHP script that I will not include here because it only gets you halfway to something useful. I did the remainder of the processing with various shell commands to transform garbage into a series of newline-delimited key:value pairs. The key is the name of the whatweb module used; the value is the concatenated string, version, and os fields from the database. I dumped this into a text file, which I have included. Emails and IP addresses have been removed.

the text file with data in the key:value format
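As an illustration of the tabulation step, here is a minimal sketch that counts the values recorded under one key. It assumes one `key:value` pair per line with the whatweb module name as the key (for example `Country` or `HTTPServer`, both whatweb plugin names); the exact layout of the included file may differ, and `tally_key` is a hypothetical name. Matching the key with awk's `index()` instead of a grep regex avoids surprises from regex metacharacters, and only the first colon is treated as the separator, so values may contain colons.

```shell
# Hypothetical helper: count the distinct values stored under one key.
# Usage: tally_key KEY FILE
tally_key() {
    awk -v k="$1" 'index($0, k ":") == 1 { print substr($0, length(k) + 2) }' "$2" |
        sort | uniq -c | sort -rn
}
```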

Using further combinations of grep, awk, cut, sed, sort, and uniq, I tabulated the data into something I could copy and paste into LibreOffice Calc. Although it would be fun to do it in R or Gnuplot, spreadsheets are designed to be used by tech-illiterate office drones. I was feeling braindead after manipulating garbage, so I am no better.
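To skip the copy-and-paste step, `uniq -c` output can be turned into CSV that LibreOffice Calc opens directly. A minimal sketch; `counts_to_csv` is a hypothetical name. It puts the label first and the count second, and doubles any embedded double quotes as CSV requires.

```shell
# Hypothetical helper: read "uniq -c" lines ("     42 nginx") on stdin and
# print CSV rows ("nginx",42).
counts_to_csv() {
    awk '{
        n = $1
        sub(/^[ \t]*[0-9]+[ \t]/, "")   # drop the count and one separator
        gsub(/"/, "\"\"")               # CSV-escape embedded quotes
        printf "\"%s\",%d\n", $0, n
    }'
}
```

Usage: `tally_key Country data.txt | counts_to_csv > country.csv`, where `data.txt` stands in for the key:value file.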

Analysis

These numbers are slightly different from the numbers in the first section because redirects were followed. The total number of servers is 140 (for some reason, certain HTTP-to-HTTPS redirects and path redirects are logged as if they were separate servers).
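Every breakdown below should account for all 140 servers, which makes the totals an easy sanity check (for the country table: 72 + 17 + 6 + 4 × 4 + 3 + 2 × 2 + 12 × 1 + 10 = 140). A minimal sketch of that check, assuming one `count label` pair per line; `sum_counts` is a hypothetical name.

```shell
# Hypothetical helper: sum the leading count of each "count label" line,
# from files given as arguments or from stdin. Lines that do not start
# with a number (headers, blank lines) are ignored.
sum_counts() {
    awk '$1 ~ /^[0-9]+$/ { total += $1 } END { print total + 0 }' "$@"
}
```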

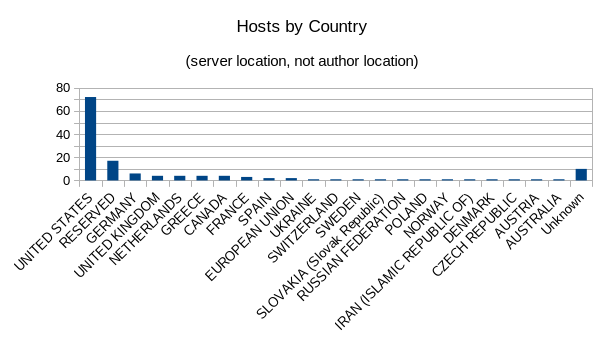

Servers by the country they are hosted in:

The RESERVED servers all had the country code ZZ, which is often used to denote an unknown or unspecified country. The Unknown servers did not present a country code at all.

```
72 UNITED STATES
17 RESERVED
6 GERMANY
4 UNITED KINGDOM
4 NETHERLANDS
4 GREECE
4 CANADA
3 FRANCE
2 SPAIN
2 EUROPEAN UNION
1 UKRAINE
1 SWITZERLAND
1 SWEDEN
1 SLOVAKIA (Slovak Republic)
1 RUSSIAN FEDERATION
1 POLAND
1 NORWAY
1 IRAN (ISLAMIC REPUBLIC OF)
1 DENMARK
1 CZECH REPUBLIC
1 AUSTRIA
1 AUSTRALIA
10 Unknown
```

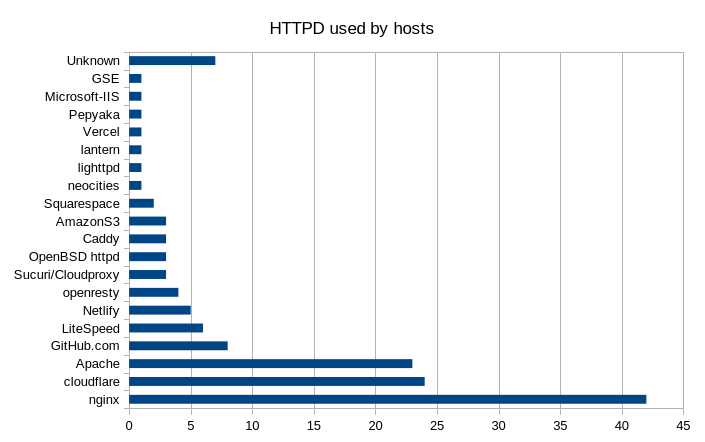

Servers by the httpd they run:

The servers reported different versions or slightly mangled names, so I condensed them into names without version numbers. Cloudflare and related CDNs usually advertise themselves through the Server: HTTP header, pretending to be a real HTTP daemon rather than software as a service in search of a solution.
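The condensing can be approximated with a few sed expressions. A sketch under assumptions: the version always follows a `/` and starts with a digit, and any OS hint sits in trailing parentheses, as in `Apache/2.4.57 (Debian)`. Names like `Sucuri/Cloudproxy` keep their slash because no digit follows it. `normalize_server` is a hypothetical name.

```shell
# Hypothetical helper: reduce Server: header values on stdin to bare
# product names, one per line.
normalize_server() {
    sed -e 's|^Server: *||' \
        -e 's|/[0-9].*$||' \
        -e 's| *([^)]*)$||'
}
```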

```
42 nginx
24 cloudflare
23 Apache
8 GitHub.com
6 LiteSpeed
5 Netlify
4 openresty
3 Sucuri/Cloudproxy
3 OpenBSD httpd
3 Caddy
3 AmazonS3
2 Squarespace
1 neocities
1 lighttpd
1 lantern
1 Vercel
1 Pepyaka
1 Microsoft-IIS
1 GSE
7 Unknown
```

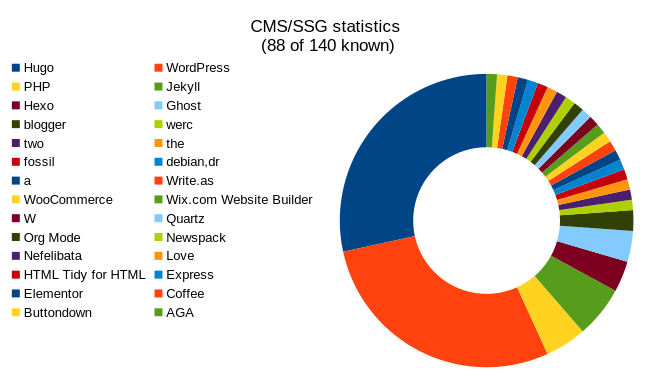

Servers by CMS/SSG stack:

Again, all of the records say something slightly different. Sites that reported a WordPress theme as their CMS got condensed into general ‘WordPress’. Hard and soft forks of WordPress were not condensed into general ‘WordPress’. Hugo, Jekyll, and PHP all had a version number attached, which I truncated. Some websites reported that they are powered by a random string or character. I left these alone because it is somewhat humorous to realize that (^-.-^) subscribes to not only a Wix website but also multiple web servers where the admin actually changed the “powered by” HTTP headers and HTML metadata to garbage.

52 servers have an unknown CMS/SSG, which means either “the admin turned off the skiddie bait” or that the software is custom. Additionally, servers reporting just PHP are either running something custom or leaking PHP versions because the badmin didn’t RTFM.
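The WordPress, Hugo, Jekyll, and PHP condensing described above can be expressed as a `case` statement. A sketch, assuming the generator strings start with the product name (for example `WordPress 6.4.3` or `Hugo 0.123.7`); forks such as ClassicPress do not match the WordPress pattern, so they stay separate, as in the table. `canonical_cms` is a hypothetical name, and theme-only entries would need their own patterns.

```shell
# Hypothetical helper: collapse a versioned CMS/SSG string into one label.
# Anything that matches no pattern is printed unchanged.
canonical_cms() {
    case $1 in
        [Ww]ord[Pp]ress*) echo WordPress ;;
        Hugo*)            echo Hugo ;;
        Jekyll*)          echo Jekyll ;;
        PHP*)             echo PHP ;;
        *)                echo "$1" ;;
    esac
}
```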

```
25 Hugo
25 WordPress
5 Jekyll
4 PHP
3 Hexo
3 Ghost
2 blogger
1 werc
1 two
1 the
1 fossil
1 debian,dr
1 a
1 Write.as
1 WooCommerce
1 Wix.com Website Builder
1 W
1 Quartz
1 Org Mode
1 Newspack
1 Nefelibata
1 Love
1 HTML Tidy for HTML
1 Express
1 Elementor
1 Coffee
1 Buttondown
1 AGA
52 Unknown
```

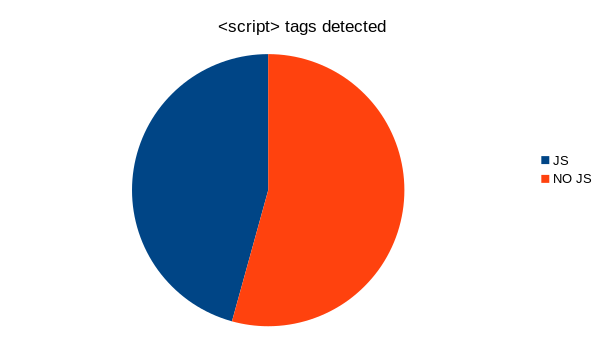

JavaScript usage

If a `<script>` tag was detected on a website, the site was counted as using JS. This is probably not very accurate, but it was interesting nonetheless.
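whatweb did the detection here; a rough stand-in for the same rule is a case-insensitive search for `<script` in the fetched HTML. This sketch reads HTML on stdin, and `has_script_tag` is a hypothetical name. Like the original count, it cannot tell a tracking snippet from a JavaScript-only site, and it also matches the string inside comments or code samples.

```shell
# Hypothetical helper: succeed (exit 0) if the HTML on stdin contains a
# <script tag, in any letter case.
has_script_tag() {
    grep -qi '<script'
}
```

Usage: `curl -s https://example.org/ | has_script_tag && echo JS || echo "No JS"`.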

```
64 JS
76 No JS
```

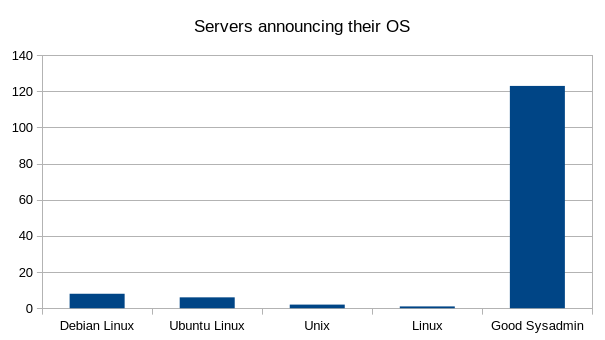

Servers reporting their OS (public shaming section)

Most servers did not announce their OS. This means either good defaults or good admins.

Debianoid admins will never learn.

```
8 Debian Linux
6 Ubuntu Linux
2 Unix
1 Linux
123 Good Sysadmin/defaults
```
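A common way a server leaks its OS is a token in parentheses after the version in the Server: header, for example `Apache/2.4.57 (Debian)` or `nginx/1.18.0 (Ubuntu)`. A minimal sketch that pulls out that token; `server_os` is a hypothetical name, and it only handles this parenthesized form. On the admin side, Apache's `ServerTokens Prod` and nginx's `server_tokens off` directives trim the header down to the bare product name.

```shell
# Hypothetical helper: print the parenthesized OS token from Server:
# header values on stdin; lines without one produce no output.
server_os() {
    sed -n 's/^[^(]*(\([^)]*\)).*$/\1/p'
}
```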

http://ilsstfnqt4vpykd2bqc7ntxf2tqupqzi6d5zmk767qtingw2vp2hawyd.onion:8080

http://xzh77mcyknkkghfqpwgzosukbshxq3nwwe2cg3dtla7oqoaqknia.b32.i2p:9090